
How do humans become so skillful? Well, initially we are not, but from infancy, we discover and practice increasingly complex skills through self-supervised play. But this play is not random: the child development literature suggests that infants use their prior experience to conduct directed exploration of affordances like movability, suckability, graspability, and digestibility through interaction and sensory feedback. This type of affordance-directed exploration allows infants to learn both what can be done in a given environment and how to do it. Can we instantiate an analogous strategy in a robot learning system?

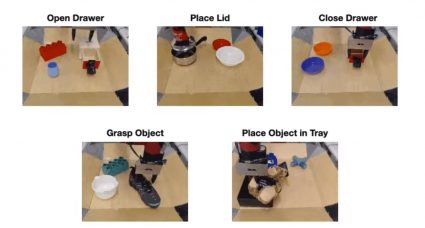

On the left we see videos from a prior dataset collected with a robot accomplishing various tasks such as drawer opening and closing, as well as grasping and relocating objects. On the right we have a lid that the robot has never seen before. The robot has been granted a short period of time to practice with the new object, after which it will be given a goal image and tasked with making the scene match this image. How can the robot rapidly learn to manipulate the environment and grasp this lid without any external supervision?

To do so, we face several challenges. When a robot is dropped in a new environment, it must be able to use its prior knowledge to think of potentially useful behaviors that the environment affords. Then, the robot has to be able to actually practice these behaviors informatively. Finally, to improve itself in the new environment, the robot must be able to evaluate its own success without an externally provided reward.

If we can overcome these challenges reliably, we open the door for a powerful cycle in which our agents use prior experience to collect high quality interaction data, which then grows their prior experience even further, continuously enhancing their potential utility!

Our method, Visuomotor Affordance Learning, or VAL, addresses these challenges. In VAL, we begin by assuming access to a prior dataset of robots demonstrating affordances in various environments. From here, VAL enters an offline phase which uses this information to learn 1) a generative model for imagining useful affordances in new environments, 2) a strong offline policy for effective exploration of these affordances, and 3) a self-evaluation metric for improving this policy. Finally, VAL is ready for its online phase. The agent is dropped in a new environment and can now use these learned capabilities to conduct self-supervised finetuning. The whole framework is illustrated in the figure below. Next, we will go deeper into the technical details of the offline and online phases.

Given a prior dataset demonstrating the affordances of various environments, VAL digests this information in three offline steps: representation learning to handle high dimensional real world data, affordance learning to enable self-supervised practice in unknown environments, and behavior learning to attain a high performance initial policy which accelerates online learning.

1. First, VAL learns a low-dimensional representation of this data using a Vector Quantized Variational Auto-encoder, or VQVAE. This process reduces our 48x48x3 images into a 144-dimensional latent space.

Distances in this latent space are meaningful, paving the way for our crucial mechanism of self-evaluating success. Given the current image s and goal image g, we encode both into the latent space, and threshold their distance to obtain a reward.

Later on, we will also use this representation as the latent space for our policy and Q function.
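The thresholded latent-distance reward from step 1 can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the Euclidean metric, the 0 / -1 reward convention, the threshold name `epsilon`, and the function names are all assumptions, and the plain lists stand in for the VQVAE encodings of the current image s and goal image g.

```python
import math


def latent_distance(z_s, z_g):
    """Euclidean distance between two latent vectors (assumed metric)."""
    if len(z_s) != len(z_g):
        raise ValueError("latent vectors must have the same length")
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(z_s, z_g)))


def sparse_reward(z_s, z_g, epsilon):
    """Self-evaluated reward: 0.0 if the encoded state is within
    epsilon of the encoded goal, otherwise -1.0 (assumed convention)."""
    return 0.0 if latent_distance(z_s, z_g) < epsilon else -1.0
```

Because the reward depends only on the two encodings, the robot can score its own attempts without any externally provided signal; the threshold controls how close the scene must be to the goal image to count as a success.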

2. Next, VAL learns an affordance model by training a PixelCNN in the latent space to learn the distribution of reachable states conditioned on an image from the environment. This is done by maximizing the likelihood of the data, $p(s_n | s_0)$. We use this affordance model for directed exploration and for relabeling goals.

The affordance model is illustrated in the figure on the right. On the bottom left of the figure, we see that the conditioning image contains a pot, and the decoded latent goals on the upper right show the lid in different locations. These coherent goals will allow the robot to perform coherent exploration.
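The maximum-likelihood training of the affordance model in step 2 can be written out as follows. This is a sketch under assumed notation: $\theta$ denotes the PixelCNN parameters, $\mathcal{D}$ the prior dataset of (initial state, reached state) pairs, and $z_0$, $z_n$ the latent encodings of $s_0$ and $s_n$ with $K$ latent elements. The product form is the standard autoregressive factorization that PixelCNN models use; the exact ordering and conditioning used in VAL are not specified here.

$$
\max_{\theta} \; \mathbb{E}_{(s_0, s_n) \sim \mathcal{D}} \left[ \log p_{\theta}(s_n \mid s_0) \right],
\qquad
p_{\theta}(s_n \mid s_0) = \prod_{i=1}^{K} p_{\theta}\left(z_n^{(i)} \mid z_n^{(1)}, \dots, z_n^{(i-1)}, z_0\right)
$$

Sampling from this model one latent element at a time yields a candidate goal that is consistent with the conditioning image.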

3. Last in the offline phase, VAL must learn behaviors from the offline data, which it can then improve upon later with extra online, interactive data collection.

To accomplish this, we train a goal-conditioned policy on the prior dataset using Advantage Weighted Actor Critic, an algorithm specifically designed for training offline and being amenable to online fine-tuning.
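The core idea of Advantage Weighted Actor Critic is a weighted maximum-likelihood policy update: actions from the dataset are imitated more strongly when the critic estimates a higher advantage for them, with weights proportional to exp(A / temperature). The sketch below illustrates only that weighting on precomputed numbers. The function names, the `temperature` default, and the normalization of weights over the batch are assumptions made for illustration; a real implementation would compute log-probabilities and advantages from neural networks.

```python
import math


def advantage_weights(advantages, temperature=1.0):
    """Weights proportional to exp(A / temperature), normalized over
    the batch (the normalization is an assumption for readability)."""
    if not advantages:
        raise ValueError("advantages must be non-empty")
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [a / temperature for a in advantages]
    shift = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - shift) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_policy_loss(log_probs, advantages, temperature=1.0):
    """Negative advantage-weighted log-likelihood of dataset actions."""
    weights = advantage_weights(advantages, temperature)
    return -sum(w * lp for w, lp in zip(weights, log_probs))
```

Because the update only ever evaluates actions that appear in the data, the same loss can be applied to the offline dataset first and to newly collected online data afterwards.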

Now, when VAL is placed in an unseen environment, it uses its prior knowledge to imagine visual representations of useful affordances, collects helpful interaction data by trying to achieve these affordances, updates its parameters using its self-evaluation metric, and repeats the process all over again.

In this real example, on the left we see the initial state of the environment, which affords opening the drawer as well as other tasks.

In step 1, the affordance model samples a latent goal. By decoding the goal (using the VQVAE decoder, which is never actually used during RL because we operate entirely in the latent space), we can see the affordance is to open a drawer.

In step 2, we roll out the trained policy with the sampled goal. We see it successfully opens the drawer, in fact going too far and pulling the drawer all the way out. But this provides extremely useful interaction data for the RL algorithm to further fine-tune on and perfect its policy.
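The online loop described above (sample a goal, roll out the policy toward it, then update on the collected data) might be sketched as follows. Everything here is a hypothetical stand-in: `run_online_phase` and `make_toy_components` are illustrative names, the state is a single number rather than an image latent, the "policy" simply steps toward the goal, and `update` only records the buffer size where a real system would run a policy and Q-function update.

```python
import random


def run_online_phase(sample_goal, rollout, update, initial_state, num_episodes):
    """Repeat: imagine a goal, practice it, update on all data so far."""
    replay_buffer = []
    for _ in range(num_episodes):
        goal = sample_goal(initial_state)           # step 1: imagined goal
        transitions = rollout(initial_state, goal)  # step 2: practice
        replay_buffer.extend(transitions)
        update(replay_buffer)                       # self-supervised update
    return replay_buffer


def make_toy_components(seed=0, step=0.25, epsilon=0.1, horizon=10):
    """Build 1-D stand-ins for the affordance model, policy, and learner."""
    rng = random.Random(seed)
    update_log = []

    def sample_goal(state):
        # Stand-in for sampling a reachable goal from the affordance model.
        return state + rng.uniform(-1.0, 1.0)

    def rollout(state, goal):
        transitions = []
        for _ in range(horizon):
            action = max(-step, min(step, goal - state))  # move toward goal
            next_state = state + action
            # Self-evaluated reward: thresholded distance to the goal.
            reward = 0.0 if abs(next_state - goal) < epsilon else -1.0
            transitions.append((state, action, reward, next_state, goal))
            state = next_state
        return transitions

    def update(buffer):
        # Placeholder for a policy / Q-function update on the buffer.
        update_log.append(len(buffer))

    return sample_goal, rollout, update, update_log
```

Note that no step in the loop requires an external reward or a human-specified goal: goals come from the model and rewards come from comparing states with those goals.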

After online finetuning is complete, we can now evaluate the robot on its ability to achieve the corresponding unseen goal images for each environment.

We evaluate our method in 5 real-world test environments, and assess VAL on its ability to achieve a specific task the environment affords before and after 5 minutes of unsupervised fine-tuning.

Each test environment consists of at least one unseen interaction object, and two randomly sampled distractor objects. For instance, while the training data includes opening and closing drawers, the new drawers have unseen handles.

In every case, we begin with the offline trained policy, which solves the task inconsistently. Then, we collect more experience using our affordance model to sample goals. Finally, we evaluate the fine-tuned policy, which consistently solves the task.

We find that in each of these environments, VAL consistently demonstrates effective zero-shot generalization after offline training, followed by rapid improvement with its affordance-directed fine-tuning scheme. Meanwhile, prior self-supervised methods barely improve upon poor zero-shot performance in these new environments. These exciting results illustrate the potential that approaches like VAL possess for enabling robots to successfully operate far beyond the limited factory settings in which they are used now.

Our dataset of 2,500 high quality robot interaction trajectories, covering 20 drawer handles, 20 pot handles, 60 toys, and 60 distractor objects, is now publicly available on our website.

For further analysis, we run VAL in a procedurally generated, multi-task environment with visual and dynamic variation. Which objects are in the scene, their colors, and their positions are randomized per environment. The agent can use handles to open drawers, grasp objects to relocate them, press buttons to unlock compartments, and so on.

The robot is given a prior dataset spanning various environments, and is evaluated on its ability to fine-tune on the following test environments.

Again, given a single off-policy dataset, our method quickly learns advanced manipulation skills including grasping, drawer opening, re-positioning, and tool usage for a diverse set of novel objects.

The environments and algorithm code can be found; please see our code repository.

Like deep learning in domains such as computer vision and natural language processing, which have been driven by large datasets and generalization, robotics will likely require learning from a similar scale of data. Because of this, improvements in offline reinforcement learning will be critical for enabling robots to take advantage of large prior datasets. Furthermore, these offline policies will need either rapid non-autonomous finetuning or entirely autonomous finetuning for real world deployment to be feasible. Lastly, once robots are operating on their own, we will have access to a continuous stream of new data, stressing both the importance and value of lifelong learning algorithms.

This post is based on the paper “What Can I Do Here? Learning New Skills by Imagining Visual Affordances”, which was presented at the International Conference on Robotics and Automation (ICRA), 2021. You can see results on our website, and we provide code to reproduce our experiments.


BAIR Blog is the official blog of the Berkeley Artificial Intelligence Research (BAIR) Lab.

